Step by Step - How to Run Advantage+ Campaigns on Meta using Andromeda in 2026

How Do I Run Advantage+ Campaigns Effectively?

Key Takeaways

Advantage+ campaigns are creative-driven systems.

Meta’s delivery engine increasingly relies on creative interaction signals to determine audience distribution, so creative variation, rather than manual targeting in the Ad Set tab, is the primary optimization lever.

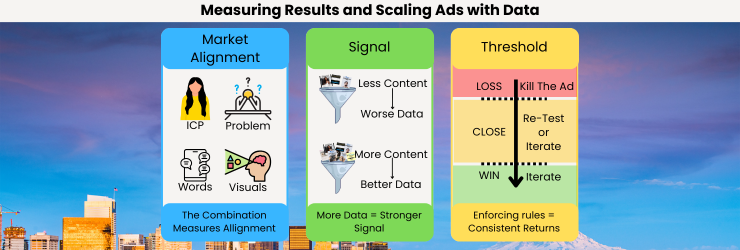

Structured testing determines whether Advantage+ scales profitably.

Without controlled variable testing and defined performance thresholds, Advantage+ campaigns simply amplify randomness rather than identifying scalable message-market alignment.

Creative capacity dictates your scaling ceiling.

Most advertisers assume budget determines scale, but in practice the number of validated creatives determines how far the system can expand before performance degrades. The reason is creative capacity: the maximum budget a single ad can sustain for your business.

Manual targeting inside Advantage+ should be used only for restrictions.

In modern Meta delivery systems (including Andromeda-powered optimization), ad set controls should primarily prevent ads from reaching disallowed audiences, not attempt to force targeting. Andromeda is the most advanced social media targeting system in the world, and is 10x better than any human. Just let it work.

If You Prefer to Watch This Article Instead of Reading It

We actually created a video version of this article that covers the same information. This article has more detail, but the video will give you everything you need to set up and run Advantage+ campaigns successfully.

Check it out!

Introduction

Most advertisers approach Advantage+ campaigns as a tactical feature rather than a structural system. They treat it as a simplified campaign type designed to automate targeting, assuming that success comes from selecting the correct settings inside the campaign interface. This interpretation misunderstands how Meta’s delivery infrastructure actually functions in 2026.

Advantage+ campaigns operate within a machine learning ecosystem that prioritizes behavioral signals generated through ad interactions. The Andromeda infrastructure powering Meta’s optimization systems aggregates engagement signals across massive datasets and uses them to identify statistically similar audiences. As a result, targeting is no longer the primary driver of advertising performance. Instead, creative interaction patterns determine distribution.

This shift explains why many advertisers struggle with Advantage+ campaigns. They attempt to manually engineer audience targeting or constrain campaigns with narrow parameters. When this occurs, the algorithm loses access to the interaction density required to identify high-conversion cohorts. The system becomes starved of signals and performance stagnates.

The other major mistake is simply increasing the budget on a "winning ad." This approach was popularized heavily after 2021, when influencers on social media focused on finding "winning products" and "winning ads" for dropshipping. This model was never very efficient, and in 2026 it is entirely obsolete. An ad testing framework in which you consistently test new creatives using SCALER is significantly more advanced and effective.

The correct model, SCALER, treats Advantage+ as a signal amplification engine. The system expands reach based on the performance characteristics of creatives. When an ad demonstrates strong engagement, click-through behavior, and conversion signals, the algorithm systematically exposes it to increasingly similar users.

Running Advantage+ effectively therefore becomes a process of engineering signal density through structured creative testing. Instead of focusing on targeting variables, operators must focus on message-market alignment, creative capacity, and controlled iteration cycles.

The remainder of this article explains the mechanisms behind this system and outlines the structural framework required to operate Advantage+ campaigns profitably at scale. In effect, it is a step-by-step tutorial on those mechanisms, so that you can set up an ad account efficiently and understand why something works, not just that it works.

This is important for getting Advantage+ campaigns to perform effectively because it aligns with Andromeda and Meta’s delivery system, which prioritizes creative interaction signals rather than manual audience targeting.

Common Structural Mistakes and Misconceptions

Despite the simplicity of Advantage+ campaign setup, several structural mistakes consistently undermine performance. I feel these should be addressed first because they conflict with the strategy that currently works on Meta.

Mistake 1: Attempting to Control Targeting

Many advertisers attempt to manipulate Advantage+ audience controls to “force” ads into specific demographic segments. This approach stems from legacy advertising models where targeting precision determined efficiency. If you are using the Ad Set tab to target manually, you are making this mistake.

However, in modern Meta systems, these controls restrict signal flow. When advertisers limit the distribution pool, the algorithm cannot identify emerging audience clusters. The result is slower learning, reduced reach, and unstable conversion rates.

The correct approach is to keep targeting broad and allow creative signals to drive audience discovery. Audience restrictions should only exist for compliance or brand safety purposes, for example, preventing ads from reaching underage users or restricted geographic regions.

Yes, this means your ad will sometimes be shown to the wrong person, but only a few times. Andromeda learns incredibly fast. Trust that it will work as intended.

Mistake 2: Running Too Few Creatives

Another common failure occurs when advertisers launch Advantage+ campaigns with between 1 and 10 creatives. With such limited variation, the algorithm receives minimal signal diversity and struggles to identify scalable audience segments.

Effective campaigns require a steady flow of creative tests. Each creative introduces a new message, hook, or framing that can generate distinct engagement patterns. More creative variation produces more behavioral data, enabling the algorithm to map message-to-audience relationships.

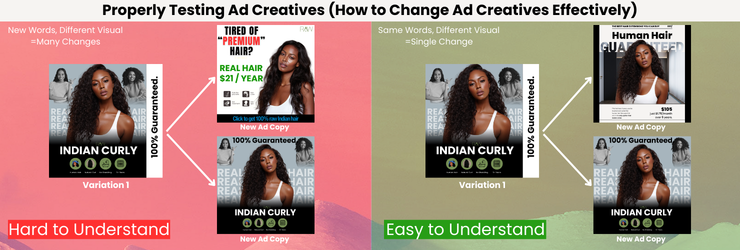

Mistake 3: Random Creative Testing

Some advertisers attempt to test creatives but fail to control variables. They change messaging, format, offer structure, and visual presentation simultaneously. When performance changes, they cannot determine which variable caused the improvement or decline.

The Affilicademy approach isolates variables during testing cycles. Each iteration introduces a specific message variation while keeping other factors consistent. This controlled testing structure allows operators to identify which problem framing or hook produces the strongest interaction signals.

Mistake 4: Budget Micromanagement

A frequent misconception concerns daily spend levels. Questions such as “Is $5 a day good for Facebook ads?” illustrate the misunderstanding. Budget size does not determine whether a campaign works. What matters is signal generation and learning velocity.

Small budgets simply slow the rate at which data accumulates. They do not inherently produce better or worse results. Campaigns must be evaluated based on conversion efficiency and interaction metrics rather than arbitrary daily spend thresholds.
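The point that budget mainly changes learning speed can be illustrated with simple arithmetic. The sketch below is only an illustration: the function name, the 50-conversion target, and the $20 cost per conversion are hypothetical values, and it assumes cost per conversion stays constant across budgets, which is a simplification.

```python
def days_to_reach(target_conversions: int,
                  cost_per_conversion: float,
                  daily_budget: float) -> float:
    """Estimate how many days it takes to collect a target number of conversions.

    Assumes a constant cost per conversion (a simplifying assumption).
    """
    if daily_budget <= 0:
        raise ValueError("daily_budget must be positive")
    return target_conversions * cost_per_conversion / daily_budget


# Same assumed efficiency at every budget: only the time to collect data changes.
for budget in (5, 50, 500):
    print(f"${budget}/day -> {days_to_reach(50, 20.0, budget)} days")
```

Under these assumptions, a smaller budget reaches the same data volume later rather than producing a different result.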

Mistake 5: Misunderstanding Algorithm Triggers

Many marketers search for specific “words that trigger the Facebook algorithm.” In reality, the algorithm does not respond to keywords in isolation. It responds to engagement behavior. If a message resonates strongly with users experiencing a specific problem, engagement rises and the algorithm expands distribution accordingly.

Therefore, the focus should be on problem-first messaging rather than attempting to engineer algorithmic triggers.

This is important for getting Advantage+ campaigns to perform effectively because removing structural mistakes allows the algorithm to generate clean interaction signals and identify profitable audiences.

Why Most Advantage+ Campaigns Collapse at Scale

Campaigns that appear profitable at low spend frequently collapse when budgets increase. This outcome is commonly attributed to audience saturation or algorithm instability, but the underlying cause is usually creative capacity.

When a campaign launches, early conversions often come from highly responsive audience segments. These users represent the lowest-resistance portion of the market. As spend increases, the algorithm must reach increasingly broad audiences.

If the creative volume remains unchanged, engagement rates begin to decline. Frequency increases, audiences with lower levels of awareness fail to convert, and conversion efficiency deteriorates. The algorithm continues expanding distribution but does so with declining results.

This dynamic explains the common challenge when managing an advertising budget. Advertisers assume they can scale spending linearly while maintaining stable results. In reality, creative effectiveness declines as exposure increases.

The solution is not simply increasing budget or adjusting targeting. The solution is increasing the volume of new creatives tested in proportion to the increase in budget.

For example, if your initial budget is $100/day with 5 ads, and each one is performing well, see how much budget each ad can take without dropping ROAS. Let’s say it’s $25 each. That is your creative capacity. If you want to run $1,000/day in ads, divide $1,000 by $25, which gives 40 ads. That is how many creatives you need running successfully to be able to spend $1,000/day at approximately the same ROAS.
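The creative capacity calculation above can be written as a small helper. This is a minimal sketch: the function name is hypothetical, and rounding up is an added assumption so that a budget that does not divide evenly is still fully covered.

```python
import math


def creatives_needed(target_daily_budget: float,
                     capacity_per_creative: float) -> int:
    """Number of validated creatives needed to absorb a daily budget.

    capacity_per_creative is the most one ad can spend per day
    before ROAS drops, as measured in your own account.
    """
    if capacity_per_creative <= 0:
        raise ValueError("capacity_per_creative must be positive")
    # Round up: a fractional creative cannot absorb the remaining budget.
    return math.ceil(target_daily_budget / capacity_per_creative)


print(creatives_needed(1000, 25))  # 40, matching the example above
```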

This is important for getting Advantage+ campaigns to scale effectively because you must scale creative volume with budget, which maintains engagement signals as audience expansion occurs.

Step-by-Step Tutorial: Setting Up an Advantage+ Campaign the Correct Way

This tutorial walks through the full workflow used in the Affilicademy methodology: defining the problem, structuring the campaign correctly, building creatives using single-variable testing, and iterating based on performance.

Step 1: Define the ICP and the Core Problem

Before touching Ads Manager, define the ideal customer profile (ICP) and the single core problem the campaign will address.

Most advertising campaigns fail because they attempt to solve multiple problems simultaneously. When messaging becomes diluted, engagement signals weaken and the algorithm cannot identify a responsive audience cluster.

Instead, the campaign should revolve around one dominant problem experienced by the target customer.

For example:

Bad framing:

• Marketing for small businesses

• Lead generation

• Social media growth

Correct framing:

• Local businesses struggling to get leads from Instagram, who have tested organic marketing in the past and failed.

This creates a clear signal environment. When users experiencing that specific problem interact with the ad, Meta’s algorithm can identify similar users and expand distribution accordingly.

A campaign should never attempt to solve multiple problems simultaneously. Instead, run separate campaigns for separate problems.

This structure ensures the algorithm receives clean engagement signals tied to a specific pain point.

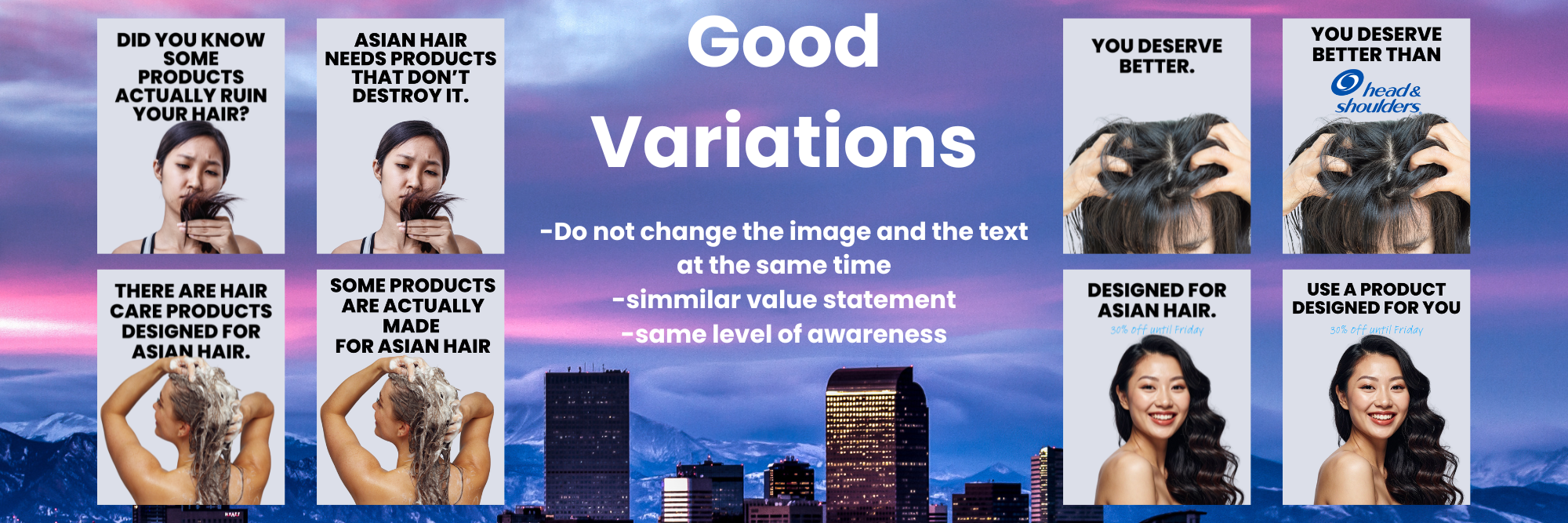

Step 2: Map the Creative Testing Matrix

Once the problem is defined, the next step is building a creative testing matrix.

The goal is not to randomly produce ads. The goal is to test different message angles around the same problem.

Each creative introduces a slightly different framing of the same issue.

For example, if the core problem is:

Businesses cannot get leads from Instagram.

Possible message angles include:

Problem awareness

Solution demonstration

Social proof

Statistic framing

Myth-breaking

Comparison framing

There are many, many more, as seen below.

Each angle introduces a different psychological entry point for the audience.

Because the problem stays constant, the algorithm can clearly measure which message framing generates the strongest engagement signals.

The goal is to allow the algorithm to discover the strongest framing through interaction data.

Step 3: Develop Creatives Using Single-Variable Testing

Creative development must follow the single-variable testing structure outlined in the Affilicademy iteration framework.

The rule is simple:

Each new creative changes only one variable.

This allows the operator to identify exactly what caused performance changes.

Variables that can be tested include:

Hook

Opening visual

Problem framing

Proof mechanism

Call to action

Example testing structure:

• Creative 1: Hook A, Visual A, Body A

• Creative 2: Hook B, Visual A, Body A

• Creative 3: Hook C, Visual A, Body A

In this scenario, only the hook changes.

If Creative 2 dramatically outperforms Creative 1, the operator knows the hook created the performance improvement.

Testing multiple variables simultaneously makes the results impossible to interpret.

This structured testing is critical because the algorithm uses engagement signals to determine audience expansion. If signals are noisy or inconsistent, optimization becomes unreliable.
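One way to enforce the single-variable rule before launch is to compare each variant against the control and confirm that exactly one field differs. The sketch below is illustrative only: the `Creative` class and its three fields (hook, visual, body) are hypothetical names chosen to mirror the example testing structure.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class Creative:
    name: str
    hook: str
    visual: str
    body: str


def changed_variables(control: Creative, variant: Creative) -> list[str]:
    """Return the creative variables that differ from the control (name ignored)."""
    a, b = asdict(control), asdict(variant)
    return [key for key in a if key != "name" and a[key] != b[key]]


def is_single_variable_test(control: Creative, variant: Creative) -> bool:
    """True only when exactly one variable changed."""
    return len(changed_variables(control, variant)) == 1


control = Creative("Creative 1", "Hook A", "Visual A", "Body A")
variants = [
    Creative("Creative 2", "Hook B", "Visual A", "Body A"),
    Creative("Creative 3", "Hook C", "Visual A", "Body A"),
]

for variant in variants:
    print(variant.name, changed_variables(control, variant),
          is_single_variable_test(control, variant))
```

A variant that changes both the hook and the visual would fail the check, which flags it as uninterpretable before any budget is spent on it.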

Step 4: Set Up the Advantage+ Campaign

Now that the messaging system and creatives are ready, the campaign can be configured.

Inside Ads Manager:

Create Campaign

Select Sales objective

Enable Advantage+ Shopping Campaign

This campaign type consolidates optimization signals into a single delivery system and allows Meta’s algorithm to determine audience targeting automatically.

Campaign setup guidelines:

Leave audience broad

Do not stack targeting layers

Do not restrict demographics unless required

Allow Advantage+ placements

Targeting restrictions should only be applied when legally necessary.

Examples include:

Age-restricted products

Regional restrictions

Compliance exclusions

Outside of those scenarios, audience controls should remain broad so the algorithm can discover responsive clusters.
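The "restrictions only" rule can be turned into a pre-launch checklist. The sketch below is a hypothetical internal check, not a Meta Ads Manager setting or Marketing API call: the reason labels and the `control`/`reason` keys are invented for illustration.

```python
# Hypothetical reason labels mirroring the three examples listed above.
ALLOWED_REASONS = {
    "age_restricted_product",
    "regional_restriction",
    "compliance_exclusion",
}


def unjustified_restrictions(restrictions: list[dict]) -> list[str]:
    """Return planned audience controls that lack an allowed reason."""
    return [
        r["control"]
        for r in restrictions
        if r.get("reason") not in ALLOWED_REASONS
    ]


planned = [
    {"control": "minimum age 21", "reason": "age_restricted_product"},
    {"control": "exclude region X", "reason": "regional_restriction"},
    {"control": "interest: yoga", "reason": None},  # an attempt to force targeting
]

print(unjustified_restrictions(planned))  # ['interest: yoga']
```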

Step 5: Upload the Creative Test Set

At the ad level, upload the creatives built during the testing phase.

A typical test batch includes:

10-30 creatives (use SCALER to calculate how many ads to test)

Each testing one variable

All addressing the same problem

These creatives enter the system simultaneously and begin generating interaction signals.

During the early learning phase, Meta distributes impressions across all creatives while evaluating engagement patterns.

Step 6: Monitor Early Signal Indicators

Instead of focusing exclusively on conversions, early campaign evaluation should focus on engagement signals.

Signals the algorithm uses heavily include:

Click-through rate

Thumb-stop rate

Video watch time

Early conversion events

Creatives that generate strong engagement signals typically scale more effectively because the algorithm receives clearer interaction patterns.

Low-engagement creatives should be removed quickly to prevent signal dilution.

The objective is to maintain a pool of high-signal creatives inside the campaign.
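Early signal review can be sketched as a small calculation over exported ad-level counts. Everything here is an assumption for illustration: thumb-stop rate is taken as 3-second video views divided by impressions (a common convention, not something this article defines), and the floors of 1,000 impressions, 1% CTR, and 25% thumb-stop are placeholder values to replace with your own benchmarks.

```python
from dataclasses import dataclass


@dataclass
class CreativeStats:
    name: str
    impressions: int
    clicks: int
    three_second_views: int


def ctr(s: CreativeStats) -> float:
    """Click-through rate: clicks per impression."""
    return s.clicks / s.impressions if s.impressions else 0.0


def thumb_stop_rate(s: CreativeStats) -> float:
    """Assumed definition: 3-second video views per impression."""
    return s.three_second_views / s.impressions if s.impressions else 0.0


def low_engagement(stats, min_impressions=1000, min_ctr=0.01, min_thumb_stop=0.25):
    """Names of creatives that are weak on both signals once they have enough data."""
    flagged = []
    for s in stats:
        if s.impressions < min_impressions:
            continue  # too little data to judge yet
        if ctr(s) < min_ctr and thumb_stop_rate(s) < min_thumb_stop:
            flagged.append(s.name)
    return flagged


stats = [
    CreativeStats("A", impressions=2000, clicks=40, three_second_views=700),
    CreativeStats("B", impressions=2000, clicks=8, three_second_views=300),
    CreativeStats("C", impressions=300, clicks=0, three_second_views=20),
]

print(low_engagement(stats))  # ['B']
```

Creative C is skipped rather than flagged because it has not yet reached the assumed minimum impression count.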

Step 7: Apply Performance Threshold Filtering

Once sufficient data accumulates, operators begin filtering creatives based on performance thresholds.

Underperforming creatives are paused.

Winning creatives remain active.

This filtering process improves signal quality across the campaign.

The algorithm receives stronger engagement signals and begins expanding delivery toward higher-probability audiences.

This stage converts a chaotic creative test environment into a high-efficiency signal system.
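Threshold filtering can be expressed as a simple decision rule. The sketch below assumes ROAS (revenue divided by spend) as the performance metric and uses placeholder thresholds of $50 minimum spend and 2.0 minimum ROAS; both numbers and the function name are hypothetical and should be set from your own margins.

```python
def filter_creatives(results, min_spend=50.0, min_roas=2.0):
    """Map each creative name to 'keep', 'pause', or 'keep testing'.

    results: iterable of (name, spend, revenue) tuples.
    """
    decisions = {}
    for name, spend, revenue in results:
        if spend < min_spend:
            # Not enough data has accumulated to judge this creative.
            decisions[name] = "keep testing"
        elif revenue / spend >= min_roas:
            decisions[name] = "keep"
        else:
            decisions[name] = "pause"
    return decisions


results = [
    ("Hook A", 120.0, 360.0),  # ROAS 3.0
    ("Hook B", 110.0, 90.0),   # ROAS below 1
    ("Hook C", 20.0, 0.0),     # under the minimum spend
]

print(filter_creatives(results))
```

The minimum-spend guard reflects the "once sufficient data accumulates" condition: a creative is not paused until it has had a fair chance to generate signals.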

Step 8: Start the Iteration Cycle

After identifying the first winners, the campaign enters the iteration phase.

New creatives are produced based on winning patterns.

For example:

If a statistic-based hook performs well, new creatives test additional statistics.

If testimonial framing performs well, additional testimonial creatives are introduced.

The goal is not to reinvent messaging.

The goal is to expand successful themes.

This iterative expansion increases creative capacity, which directly increases the campaign’s scaling ceiling.
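Planning the next batch around winning themes can be sketched as a proportional allocation. This is one possible approach, not a prescribed formula: the function name, the (creative, angle) tuple format, and the choice to allocate slots in proportion to the number of winners per angle are all assumptions.

```python
from collections import Counter


def plan_next_batch(winners, batch_size):
    """Split the next batch of creatives across winning angles.

    winners: list of (creative_name, angle) tuples for creatives that passed filtering.
    Returns {angle: number_of_new_creatives}.
    """
    counts = Counter(angle for _, angle in winners)
    total = sum(counts.values())
    plan = {angle: (batch_size * n) // total for angle, n in counts.items()}
    # Hand out slots lost to integer division, most frequent angle first.
    leftover = batch_size - sum(plan.values())
    for angle, _ in counts.most_common(leftover):
        plan[angle] += 1
    return plan


winners = [
    ("Creative 2", "statistic"),
    ("Creative 7", "statistic"),
    ("Creative 9", "testimonial"),
]

print(plan_next_batch(winners, 10))  # {'statistic': 7, 'testimonial': 3}
```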

Step 9: Reinvest Profits Into Creative Testing

The final stage of the system is profit-funded iteration.

When campaigns generate profitable results, reinvest a portion of the profit into additional creative testing.

This produces a reinforcing growth loop.

More creatives generate more signals.

More signals produce more conversions.

More conversions fund more creative production.

Over time, this process compounds.

Campaigns become more stable, reach expands, and acquisition costs decline due to stronger message-market alignment.
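The reinvestment step reduces to a short calculation: how many new creative tests a given profit can fund. The sketch below uses whole-dollar integers and hypothetical inputs (a 30% reinvestment share and a $50 cost per creative); none of these figures come from the framework itself.

```python
def creatives_funded(profit: int, reinvest_percent: int, cost_per_creative: int) -> int:
    """Whole number of new creatives a period's profit can fund.

    All amounts are whole dollars; reinvest_percent is 0-100.
    """
    if not 0 <= reinvest_percent <= 100:
        raise ValueError("reinvest_percent must be between 0 and 100")
    if cost_per_creative <= 0:
        raise ValueError("cost_per_creative must be positive")
    testing_budget = max(profit, 0) * reinvest_percent // 100
    return testing_budget // cost_per_creative


# Assumed figures: $2,000 profit, 30% reinvested, $50 per creative.
print(creatives_funded(2000, 30, 50))  # 12
```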

If You Want Ads Created for You, for Free, on a Performance Basis

That’s exactly what we built Affilicademy to solve.

We work with brands to create a social media acquisition system powered by affiliate content creators, where a network of creators produces ads for your brand and feeds them directly into structured testing campaigns like the framework outlined in this article. (We wrote this article based on what works.)

To make it even easier to test the system, we’re currently offering:

A free trial where I will set up and manage your ads for free, then

Performance-based advertising where you only pay when ads convert

Access to the same structured testing and iteration framework outlined in this guide

If you want a system where ads are continuously produced, tested, and scaled without relying on guesswork, this model was built specifically for that.

If you want to see how it works for your brand, apply to work with Affilicademy and we’ll walk you through how the creator-driven acquisition system operates.

https://affilicademy.com/10freeugc

Strategic Synthesis

Advantage+ campaigns are often misunderstood because advertisers focus on the visible campaign interface rather than the invisible optimization system behind it.

The advertisers who consistently succeed with Advantage+ treat advertising as an engineering process. They design campaigns around controlled testing structures, enforce performance thresholds, and maintain continuous creative iteration.

Operators who understand this dynamic treat scaling as a capacity challenge. They expand creative production, introduce new messaging angles, and allow the algorithm to discover new audience segments.

The result is a compounding system where creative capacity drives signal density, signal density drives audience discovery, and audience discovery drives profitable growth.

FAQ

What is the Advantage+ audience?

Advantage+ audience allows Meta’s algorithm to determine targeting based on interaction signals rather than manual demographic selection. The system analyzes engagement patterns to identify users statistically likely to convert.

Advantage+ Shopping campaign vs manual campaigns — which is better?

Advantage+ Shopping campaigns generally perform better for acquisition because they consolidate optimization signals into a single learning system. Manual campaigns remain useful when strict audience segmentation or exclusions are required.

Is $5 a day good for Facebook ads?

Budget size does not determine campaign effectiveness. Small budgets simply slow down data collection and learning speed. Performance should be evaluated using engagement signals and conversion efficiency rather than daily spend.

What words trigger the Facebook algorithm?

The algorithm does not respond to specific words. It responds to engagement signals generated when messaging resonates with the audience’s problem. Strong problem-aligned messaging produces higher interaction rates and better distribution.

What is the biggest challenge when managing an advertising budget?

The biggest challenge is scaling spend faster than creative capacity. Without a continuous pipeline of new creatives, campaigns experience fatigue and declining hit rates as budgets increase.